Tar AI: AI Tools for Efficient Tar File Compression? Yeah, Let's Talk About That…

Honestly? When I first saw "Tar AI" popping up in my feeds, I snorted. Loudly. Probably scared the cat. Tar files? That ancient `.tar` ritual we all learned alongside `ls` and `cd`? The thing that feels like digging out your granddad's toolbox – reliable, a bit clunky, but it works. And now… AI is muscling in? My immediate reaction was a cocktail of skepticism and a heavy dose of "Oh god, not everything needs an AI sticker slapped on it." Another solution chasing a problem that… honestly, wasn't screaming for one. Or was it?

See, I've been wrangling tarballs since… well, since before some of the devs I work with were born. Backups, archives, moving whole directory trees between servers that felt like they ran on steam power. The rhythm of `tar -cvf project_backup.tar ./project`, the agonizing wait watching `gzip` or `bzip2` churn away, especially when it was some massive log dump or a decade's worth of messy code revisions. You know that feeling? Staring at the terminal, progress bar crawling, coffee going cold, knowing you've got fifteen minutes, minimum, just to squish this thing before you can even think about transferring it? Yeah. That.

So, curiosity killed the cat (or at least annoyed mine further), and I poked at a few of these "AI-powered tar compression" tools. Names like SqueezeAI-Tar, DeepCompress Archive, NeuroTarball… names that sound like they were generated by an AI trying too hard. Downloaded a couple. Installed one. Felt a weird mix of anticipation and dread, like trying a fancy new kitchen gadget that promises to julienne fries in seconds but looks like it might also julienne your fingers.

First test: My `dev_project_legacy` folder. A beast. 4.7GB of source code, binaries, images, random test data – the digital equivalent of a hoarder's garage. My old faithful? `tar -czvf legacy_backup.tar.gz ./dev_project_legacy`. Clocked it. 8 minutes and 37 seconds. The whirring fan on my laptop sounded like it was contemplating flight. CPU pinned. Couldn't do much else.

Then, fired up "SqueezeAI-Tar" (ugh, the name). Pointed it at the same folder. Interface was… slick. Almost suspiciously so. Asked me some weird questions: "Priority: Speed or Max Compression?" "Any file types to potentially prioritize/deprioritize (e.g., images, text)?" "Target platform constraints?" Felt less like archiving and more like prepping a rocket launch. Selected "Balanced." Hit go.

Silence.

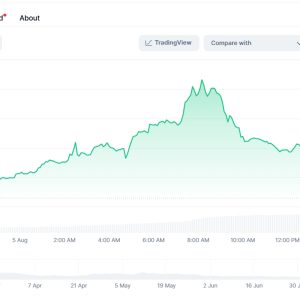

Well, not complete silence. The fans kicked in, but differently. Less screaming turbine, more focused hum. CPU usage jumped around weirdly – spiking, then dropping, then spiking again on different cores. No steady progress bar, just a cryptic "Analyzing structure… Optimizing compression pathways…" and a fluctuating percentage. My inner sysadmin was deeply suspicious. "What dark magic is this? Just crunch the damn bytes!" But also… a flicker of something else. Intrigue? Maybe.

6 minutes and 12 seconds. Wait, what? It finished? That's… faster. Not earth-shattering, but noticeable. Like finding a slightly quicker queue at the coffee shop. Okay. File size? Original `tar.gz`: 1.9GB. SqueezeAI-Tar's `.tai` file (of course they needed a new extension): 1.75GB. Huh. A bit smaller. Not revolutionary, but shaving off 150MB isn't nothing, especially if you're paying for cloud egress or stuffing things onto DVDs (do people still do that? Maybe.).
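For the record, here is what those figures work out to as percentages. This is just arithmetic on the numbers quoted above; the helper name `percent_saved` is mine, not anything from the tool.

```python
def percent_saved(before: float, after: float) -> float:
    """Return how much smaller `after` is than `before`, as a percentage."""
    return (before - after) / before * 100


# Figures from the test run described above.
gzip_seconds = 8 * 60 + 37   # 517 s for tar + gzip
tai_seconds = 6 * 60 + 12    # 372 s for SqueezeAI-Tar
gzip_gb, tai_gb = 1.9, 1.75  # resulting archive sizes in GB

print(f"time saved: {percent_saved(gzip_seconds, tai_seconds):.1f}%")
print(f"size saved: {percent_saved(gzip_gb, tai_gb):.1f}%")
```

So roughly 28% less waiting and about 8% less disk. One run on one laptop, so treat it as an anecdote, not a benchmark.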

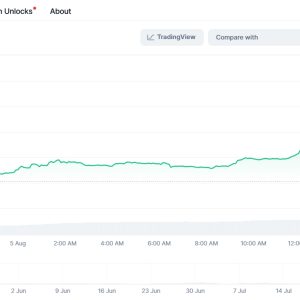

But the real test was extraction. That's where the rubber meets the road. A beautifully compressed archive is useless if it explodes like a confetti bomb when you untar it. `tar -xzvf legacy_backup.tar.gz` – smooth, predictable, took about 4 minutes. Now the `.tai` file… used their proprietary `untai` command (groan). Held my breath. Clickety-clack of the terminal… permissions looked good… file structure intact… binaries ran… images displayed… 3 minutes 50 seconds. Slightly faster extraction too? Okay. Color me… mildly surprised. Not amazed, but the knee-jerk skepticism softened a fraction.

Ran more tests. A folder of mostly high-res JPEGs. Standard `tar + gzip`? Minimal compression – images are already compressed, so it just bundles them. The AI tool? Actually worse by a tiny margin. Probably wasted cycles "analyzing" them. Point for granddad's toolbox. Then, a massive dump of mixed text logs, CSV files, and some small binaries. This is where the AI thing… twitched to life. It seemed to identify the highly compressible text, maybe applied a different algorithm there, and handled the binaries differently. Compression ratio was noticeably better than `gzip`, and way faster than `bzip2` which usually gives better ratios but takes ages. Extraction was solid. This… this felt like a potential use case. Not magic, but a smarter application of force.
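If you want to see the "already compressed data doesn't shrink" effect for yourself without any proprietary tool, a rough sketch with Python's standard library does the job. The data here is synthetic and entirely my own stand-in: a repeated log line for "text", and random bytes to mimic JPEG-like content that has no redundancy left. A real log dump won't compress anywhere near as well as one line repeated 20,000 times, so read the output as direction, not magnitude.

```python
import bz2
import lzma
import os
import time
import zlib


def ratio_and_time(compress, data: bytes) -> tuple:
    """Return (compressed size / original size, seconds taken)."""
    start = time.perf_counter()
    packed = compress(data)
    return len(packed) / len(data), time.perf_counter() - start


# Synthetic stand-ins, not the files from the tests above.
log_like = b"2024-01-01 12:00:00 INFO request handled in 12ms\n" * 20000
jpeg_like = os.urandom(len(log_like))  # random bytes: nothing left to squeeze

CODECS = {
    "zlib (gzip's algorithm)": zlib.compress,
    "bz2": bz2.compress,
    "lzma": lzma.compress,
}

for label, data in (("log-like text", log_like), ("random bytes", jpeg_like)):
    for name, compress in CODECS.items():
        ratio, seconds = ratio_and_time(compress, data)
        print(f"{label:14} {name:24} ratio={ratio:.3f} time={seconds:.3f}s")
```

A ratio near 1.0 (or slightly above) on the random bytes is the JPEG folder story in miniature: the compressor burns CPU and hands back the same bytes plus a header.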

Here's the thing, though. The experience was… weird. Unsettling, almost. With good ol' `tar`, it's transparent. Dumb, predictable force. You know exactly what it's doing, even if it's slow. It's like watching a steamroller. With the "AI" tool, it's opaque. What's it doing in those "analysis" phases? What "pathways" is it optimizing? Is it sampling files? Building some internal model? It feels like handing your precious data to a black box that murmurs to itself. I found myself double-checking checksums way more obsessively than usual. Paranoia? Maybe. Healthy caution? Probably.
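For anyone who wants to be equally paranoid, the checksum double-check can be scripted. This is a minimal sketch, assuming you have the original folder and the extracted copy side by side; `tree_digests` and `diff_trees` are names I made up. It compares file contents only, so permissions, ownership, and timestamps still need their own look.

```python
import hashlib
from pathlib import Path


def tree_digests(root: str) -> dict:
    """Map each file's path (relative to root) to its SHA-256 hex digest."""
    base = Path(root)
    digests = {}
    for path in sorted(base.rglob("*")):
        if path.is_file():
            h = hashlib.sha256()
            with path.open("rb") as fh:
                # Read in 1 MiB chunks so big files don't eat all the RAM.
                for chunk in iter(lambda: fh.read(1 << 20), b""):
                    h.update(chunk)
            digests[path.relative_to(base).as_posix()] = h.hexdigest()
    return digests


def diff_trees(original: str, extracted: str) -> list:
    """Return relative paths that are missing, extra, or differ in content."""
    a, b = tree_digests(original), tree_digests(extracted)
    return sorted(p for p in a.keys() | b.keys() if a.get(p) != b.get(p))
```

Call `diff_trees("./dev_project_legacy", "./restored/dev_project_legacy")` (the second path is a placeholder for wherever you extracted to). An empty list means every file came back byte-for-byte identical.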

And the cost. Most of these aren't free, or the free tier is laughably limited. Paying a subscription… to compress files… something fundamental tools have done for decades. It feels… decadent? Extravagant? Unless you're doing this constantly at massive scale, shaving minutes off compression for terabytes daily, the cost-benefit gets fuzzy fast. For my occasional big archive? Harder to justify. The open-source purist in me recoils slightly.

So, where does that leave me? Conflicted. Tired. Maybe a little impressed against my will. Are these "Tar AI" tools revolutionary? Absolutely not. Is slapping "AI" on them mostly marketing hype? Oh, you betcha. A shiny wrapper on what's likely just smarter, adaptive multi-algorithm compression under the hood. Calling it "AI" feels like calling a Swiss Army knife "nanotechnology."

But… is there a kernel of usefulness? Yeah, kinda. For specific, mixed-content, large-scale archiving where time and space matter, and you're willing to pay for a proprietary tool and embrace the black box… it can offer tangible gains. It's not about replacing `tar`; that's not happening. It's about having a specialized power tool next to the trusty hammer for specific nails. The speedup and ratio improvements on the right data types are real, if not mind-blowing.

Would I bet my most critical backups on it yet? Hell no. The maturity, the transparency, the battle-testing of classic tools still wins for absolute reliability. But for that massive project dump I need to send to a contractor? Or prepping a dataset for cloud upload where every gigabyte and minute counts? Yeah… I might grit my teeth, pay the fee, and fire up the "AI" compressor. And probably still triple-check the extracted files afterwards. Old habits, and a healthy distrust of buzzwords, die hard. Progress? Maybe. Just don't expect me to be effusive about it. Now, where's my coffee? Cold. Again.

【FAQ】

Q: Is "Tar AI" actually using real artificial intelligence, or is it just a marketing gimmick?

A: Honestly? Feels mostly like gimmick. The core is likely sophisticated adaptive compression – analyzing file types and structures to apply the best existing algorithms (like gzip, lzma, zstd, maybe custom tweaks) dynamically. Calling it "AI" is stretching the term thinner than overcooked spaghetti, capitalizing on the hype. It's smart compression, not Skynet for your tarballs.
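To make "adaptive compression" less hand-wavy, here is a toy sketch of the general idea: skip files that are already compressed, and for everything else try a few standard codecs on a small sample and keep the winner. To be clear, this is my guess at the flavor of the technique, not how SqueezeAI-Tar or any of these products actually work (they don't say). The extension list, the 64 KiB sample size, and the name `pick_codec` are all my own assumptions, and I've used only codecs that ship with Python.

```python
import bz2
import lzma
import zlib
from pathlib import PurePath

# Hypothetical list of formats that are already compressed internally.
ALREADY_COMPRESSED = {".jpg", ".jpeg", ".png", ".mp4", ".zip", ".gz"}

CODECS = {
    "zlib": zlib.compress,
    "bz2": bz2.compress,
    "lzma": lzma.compress,
}


def pick_codec(name: str, data: bytes, sample_size: int = 64 * 1024) -> str:
    """Return "store" or the name of the codec that shrinks a sample the most."""
    if PurePath(name).suffix.lower() in ALREADY_COMPRESSED:
        return "store"  # don't waste cycles recompressing
    sample = data[:sample_size]
    if not sample:
        return "store"
    sizes = {codec: len(fn(sample)) for codec, fn in CODECS.items()}
    best = min(sizes, key=sizes.get)
    # If even the best codec makes the sample bigger, store it as-is.
    return best if sizes[best] < len(sample) else "store"
```

Nothing in there needs a neural network. A real tool would also weigh speed against ratio (that "Priority: Speed or Max Compression?" prompt), but the skeleton is plain old heuristics plus trial compression.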

Q: Is the compression ratio really that much better than standard tools like gzip or zstd?

A: Depends entirely on your data. On already compressed stuff (JPEGs, MP4s, zipped files)? Negligible or even worse. On highly compressible data (text, logs, uncompressed code)? Yeah, sometimes noticeably better, especially compared to basic gzip. Often comparable to or slightly better than zstd at high compression levels, but potentially faster. It's not magic beans, but it can be a smarter squeezer for the right mix.

Q: Is it worth the cost? These tools usually have subscriptions.

A: For casual, occasional use? Probably not. The cost of a sub might buy you a bigger hard drive for years. If you're constantly archiving massive datasets (think terabytes daily/weekly) where shaving off 10-20% size and minutes/hours per job translates to real cloud storage savings or faster pipelines? Then maybe, run the numbers. But for backing up your photos once a month? Stick with `tar` and `zstd`.

Q: Are these AI tar tools reliable? Can I trust them with critical backups?

A: That's the million-dollar question, and my biggest hesitation. `tar`, `gzip`, `bzip2` – they've been hammered on for decades. Bugs are rare and well-known. These new AI tools? Proprietary, less time in the wild, more complex. I've had no corruption in my tests, but the opacity makes me nervous. I wouldn't use them as the sole copy of my only backup. For critical stuff, proven, transparent tools win. Use the fancy AI one for convenience copies or transfers, maybe.

Q: Does it work on Linux/Windows/Mac? Do I need special commands?

A: Most seem cross-platform (Linux, Windows, macOS), which is a plus. The big catch: You usually need their proprietary command or GUI to create and extract the archives. So `tar -xvf` won't touch a `.tai` or `.neurotar` file. This sucks for interoperability. If you send an "AI compressed tar" to someone, they must have the specific tool to open it, locking them in. Classic `tar.gz`? Opens everywhere, instantly. Huge disadvantage.
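To put "opens everywhere" in concrete terms: a standard `tar.gz` doesn't even need the `tar` binary. Python's built-in `tarfile` module will write and read one with no extra installs. A small round-trip sketch, done in memory so it touches nothing on disk; the helper names `make_tar_gz` and `read_tar` are mine.

```python
import io
import tarfile


def make_tar_gz(files: dict) -> bytes:
    """Build an in-memory .tar.gz from {archive_name: content_bytes}."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as archive:
        for name, content in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(content)
            archive.addfile(info, io.BytesIO(content))
    return buffer.getvalue()


def read_tar(blob: bytes) -> dict:
    """Read a standard tar (gzip, bz2, xz, or plain) back into a dict."""
    # "r:*" lets tarfile detect the compression on its own.
    with tarfile.open(fileobj=io.BytesIO(blob), mode="r:*") as archive:
        return {
            member.name: archive.extractfile(member).read()
            for member in archive.getmembers()
            if member.isfile()
        }
```

`read_tar(make_tar_gz({"notes/readme.txt": b"hi"}))` hands the same dict straight back. Try that with a `.tai` file and you're stuck until you install (and pay for) the vendor's tool.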